
Data-Driven Capacity Planning for ISPs

By weirdtoo · February 23, 2026 · 8 min read

AI forecasting (LSTM) boosts ISP capacity planning—improving accuracy, cutting costs, and preventing peak-hour congestion with real-time monitoring and automation.


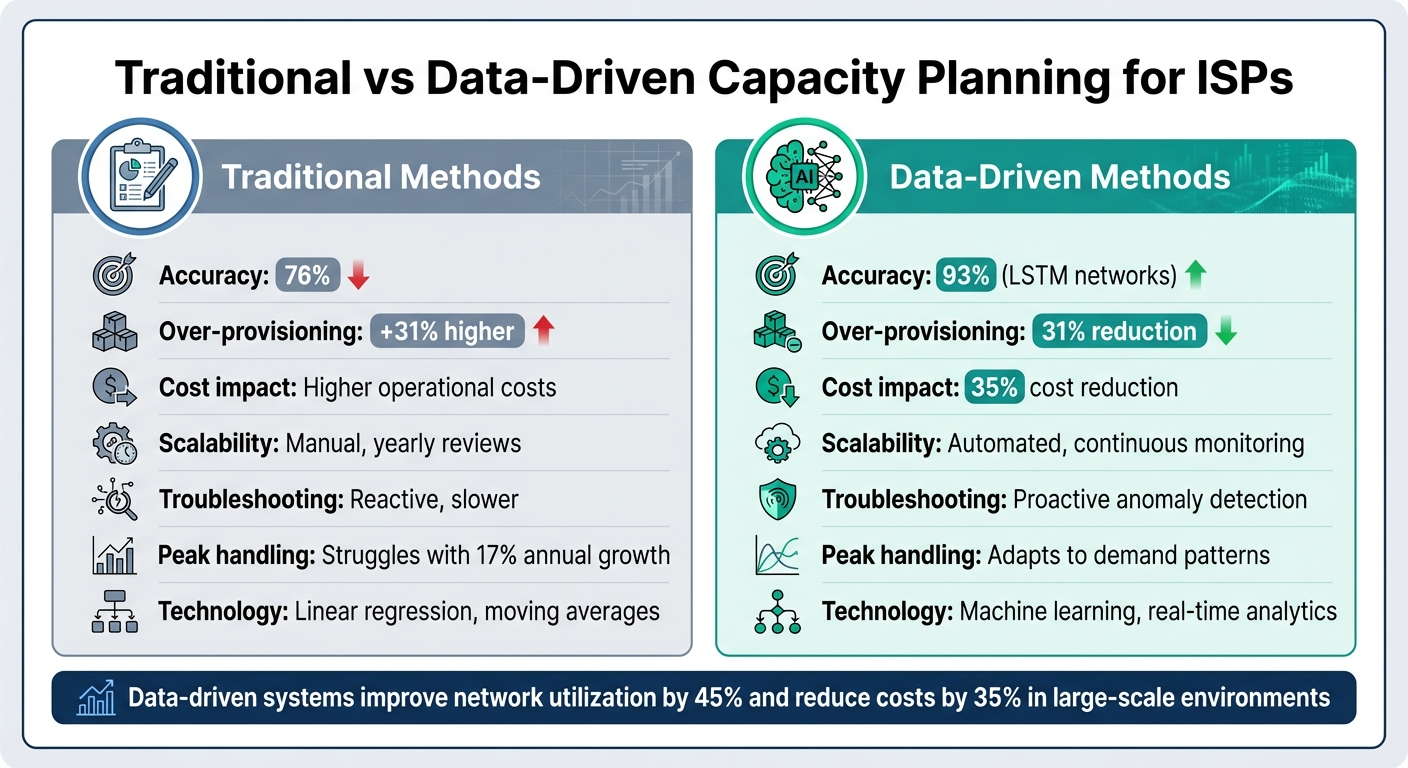

Capacity planning for ISPs is about balancing network resources to meet user demand without overspending or causing congestion. Traditional methods rely on historical data and basic statistical models but often fall short during sudden demand shifts. Newer, AI-powered approaches use machine learning to predict demand more accurately, cutting costs and improving efficiency.

Key Points:

- Traditional Methods:
  - Use historical data with techniques like linear regression.
  - Achieve ~76% accuracy but struggle with sudden traffic changes.
  - Over-provision more heavily; AI-based methods cut over-provisioning by 31% relative to this baseline.
  - Require manual reviews, making them less scalable.
- Data-Driven Methods:
  - Leverage AI models like LSTM for 93% accuracy.
  - Reduce over-provisioning by 31% and costs by 35%.
  - Offer real-time monitoring and automated scalability.
  - Handle peak usage better by analyzing granular data.

Quick Insight: ISPs with growing networks or dynamic traffic patterns benefit most from AI-driven systems, which predict demand more precisely and optimize resource use. Smaller, stable ISPs may find traditional methods sufficient but less efficient.

Traditional vs Data-Driven ISP Capacity Planning: Performance Comparison


1. Traditional Capacity Planning

Traditional capacity planning relies on statistical techniques to predict future network needs by analyzing past performance. Internet Service Providers (ISPs) often use methods like linear regression, moving averages, and seasonal trending to forecast demand based on historical data [2]. A key data source for these forecasts is interface byte counters linked to subscriber identifiers, which are typically tracked in 15-minute intervals over several months [4].
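As a rough illustration of the traditional workflow described above, the sketch below turns cumulative interface byte counters sampled every 15 minutes into average rates and fits a straight-line trend. The function names and the sample counter values are hypothetical, and real collectors also have to handle counter width and missing polls, which this sketch only approximates by skipping negative deltas.

```python
from statistics import mean

INTERVAL_SECONDS = 15 * 60  # 15-minute polling interval


def counters_to_mbps(byte_counters):
    """Convert cumulative byte counters into average Mbps per interval."""
    rates = []
    for prev, curr in zip(byte_counters, byte_counters[1:]):
        delta = curr - prev
        if delta < 0:
            # Counter reset or wrap: skip the interval instead of guessing.
            continue
        rates.append(delta * 8 / INTERVAL_SECONDS / 1_000_000)
    return rates


def linear_trend(values):
    """Ordinary least-squares fit of values against their index.

    Returns (slope, intercept).
    """
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = mean(values)
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def forecast(values, steps_ahead):
    """Extrapolate the fitted line steps_ahead intervals past the last sample."""
    slope, intercept = linear_trend(values)
    return intercept + slope * (len(values) - 1 + steps_ahead)


# Hypothetical counters: 112.5 MB per 15 minutes equals 1 Mbps.
rates = counters_to_mbps([0, 112_500_000, 225_000_000])
print(rates, forecast([1.0, 2.0, 3.0, 4.0], steps_ahead=2))
```

A straight line like this can only follow steady growth, which is one reason such models miss the sudden, non-linear shifts discussed in the next section.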

Accuracy

Traditional approaches achieve around 76% accuracy when predicting workloads with variability [1]. However, they struggle to account for sudden, non-linear shifts in demand. Many ISPs also rely on monthly heuristics, such as total traffic over a month, to estimate peak usage. While this might seem practical, it often fails to accurately represent congestion during peak hours [4]. Research on seven major U.S. ISPs revealed that aggregate interconnect utilization during peak periods was only about 50% [3]. This suggests that providers maintain a significant buffer of spare capacity to offset inaccuracies in forecasting, though this practice drives up costs through over-provisioning.
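To see why a monthly total can hide peak-hour congestion, the following sketch compares the average rate implied by a byte total with a nearest-rank 95th percentile of 15-minute samples. The traffic profile is invented for illustration, and the choice of the 95th percentile is an assumption, not something the cited studies prescribe.

```python
import math


def monthly_average_mbps(total_bytes, days=30):
    """Average rate implied by a monthly byte total."""
    return total_bytes * 8 / (days * 86_400) / 1_000_000


def percentile_mbps(samples_mbps, percentile=95):
    """Nearest-rank percentile of per-interval rates."""
    ordered = sorted(samples_mbps)
    rank = max(1, math.ceil(percentile / 100 * len(ordered)))
    return ordered[rank - 1]


# Hypothetical day of 15-minute samples: 20 quiet hours, 4 busy hours.
day = [100.0] * 80 + [800.0] * 16
average = sum(day) / len(day)
busy = percentile_mbps(day)
print(f"average {average:.0f} Mbps, 95th percentile {busy:.0f} Mbps")
```

In this made-up profile the busy-hour rate is several times the average, so a link sized from the average alone would congest every evening.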

Cost Efficiency

Manual capacity planning is labor-intensive and contributes to higher operational costs [5]. Because traditional forecasting methods are less precise, ISPs often over-provision their networks to ensure performance during peak demand. On the flip side, underestimating demand can result in hefty, unexpected bills from cloud providers during traffic surges [2].

Scalability

As networks grow, manual planning methods struggle to keep up [5]. Traditional statistical models aren’t equipped to handle the dynamic resource demands of modern distributed systems. This challenge becomes even more pronounced as networks span multiple ISP backbones and cloud infrastructures, where operators have less direct control [2]. The transition from voice to data services has made capacity planning far more complex, requiring ISPs to manage diverse applications and rapidly increasing traffic volumes [8]. These scalability challenges often lead to service delays, especially during peak periods.

Troubleshooting Speed

Traditional capacity planning also impacts operational responsiveness. It relies on lagging indicators and lacks automated tools for detecting anomalies or pinpointing root causes [2][6]. For instance, a WAN link operating at 90% capacity might trigger an alert, but without application-level insights, network teams can’t determine whether the traffic involves critical real-time data or less urgent backups [2]. This lack of detailed visibility delays troubleshooting, forcing teams to manually investigate congestion issues.

Peak Usage Handling

Predicting peak busy hour demands is another area where traditional methods fall short. When demand surpasses supply, networks experience "flat topping", where daily usage graphs form plateaus instead of curves [7]. Internet peak busy hour load per subscriber grows at an annual rate of 17%, while monthly per-subscriber data transfer grows at about 25% annually [7]. This widening gap between peak load and overall usage is something traditional forecasting methods are ill-equipped to address.
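Flat topping can be checked for mechanically. The sketch below looks for a run of consecutive samples pinned near link capacity; the 95% threshold and the four-interval minimum (one hour of 15-minute samples) are assumed values that an operator would tune.

```python
def longest_plateau(samples_mbps, capacity_mbps, threshold=0.95):
    """Longest run of consecutive samples at or above threshold * capacity."""
    limit = threshold * capacity_mbps
    longest = current = 0
    for rate in samples_mbps:
        current = current + 1 if rate >= limit else 0
        longest = max(longest, current)
    return longest


def is_flat_topped(samples_mbps, capacity_mbps, min_intervals=4, threshold=0.95):
    """True when usage sits near capacity for at least min_intervals samples."""
    return longest_plateau(samples_mbps, capacity_mbps, threshold) >= min_intervals


# Hypothetical evening on a 1000 Mbps link.
healthy = [300, 600, 900, 700, 400]
congested = [300, 960, 980, 990, 970, 400]
print(is_flat_topped(healthy, 1000), is_flat_topped(congested, 1000))
```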

2. Data-Driven Capacity Planning

Data-driven capacity planning uses AI to predict network demand with a level of accuracy that goes beyond traditional statistical methods. Instead of relying on averages, these systems leverage advanced algorithms like Long Short-Term Memory (LSTM) networks to identify complex, non-linear traffic patterns. For example, LSTM models achieve a prediction accuracy of 93%, compared to 76% for older methods [1].
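The article does not say how the 76% and 93% figures are computed. One common convention, assumed here purely for illustration, is to report accuracy as 100% minus the mean absolute percentage error (MAPE). The sketch below shows that calculation so the two numbers can be compared on a known footing when evaluating a model on your own traffic.

```python
def mape(actual, predicted):
    """Mean absolute percentage error, skipping intervals with zero traffic."""
    if len(actual) != len(predicted):
        raise ValueError("series must have the same length")
    pairs = [(a, p) for a, p in zip(actual, predicted) if a != 0]
    if not pairs:
        raise ValueError("no non-zero actual values to compare against")
    return 100 * sum(abs(a - p) / abs(a) for a, p in pairs) / len(pairs)


def forecast_accuracy(actual, predicted):
    """Accuracy as 100 - MAPE, floored at zero (an assumed convention)."""
    return max(0.0, 100 - mape(actual, predicted))


# Hypothetical peak-hour rates in Mbps.
print(forecast_accuracy([100, 200], [90, 220]))
```

Other definitions (for example, share of forecasts within a tolerance band) give different numbers for the same model, so it is worth fixing one definition before comparing methods.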

Accuracy

Traditional methods often fall short in capturing the full picture of network demand. AI-driven models, however, bring a new level of precision. Take Microsoft Azure’s deployment at Haaga-Helia University of Applied Sciences in November 2024 - it’s a great example of what’s possible. By using AI-powered capacity planning, they reduced per-student costs by 35% and increased system utilization by 64% [1]. These systems also incorporate outlier detection to avoid skewing predictions with irregular data, like faulty sensor readings or rare events [9].
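Outlier handling of the kind mentioned above can be sketched with a median-based filter. This is one standard technique (the modified z-score based on median absolute deviation), not necessarily what the cited systems use; the 3.5 cutoff is the commonly quoted default and the sample readings are invented.

```python
from statistics import median


def mad_outlier_indices(values, threshold=3.5):
    """Indices whose modified z-score (MAD-based) exceeds threshold."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread to measure against
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - med) / mad > threshold]


def replace_outliers(values, threshold=3.5):
    """Copy of values with flagged points replaced by the series median."""
    flagged = set(mad_outlier_indices(values, threshold))
    med = median(values)
    return [med if i in flagged else v for i, v in enumerate(values)]


# Hypothetical Mbps readings with one faulty sample.
readings = [100, 102, 98, 101, 99, 5000, 100]
print(mad_outlier_indices(readings), replace_outliers(readings))
```

The median is used instead of the mean because a single bad reading barely moves it, so the filter does not get skewed by the very points it is trying to catch.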

"The real differentiator isn't choosing between statistical extrapolation and a sophisticated ML algorithm. It's about having access to the right data: granular, real-time network performance metrics deeply correlated with application behavior and user experience." – Yann Guernion, Product Marketing Manager, Broadcom Inc. [2]

Cost Efficiency

AI-based systems excel at cutting unnecessary costs. By reducing resource over-provisioning by 31% compared to traditional methods, they ensure upgrades are made where they’ll have the biggest impact [1]. For wireless ISPs, these models can pinpoint customers with poor signal quality who consume excessive airtime - sometimes more than half of an access point’s capacity during peak hours. This insight helps avoid costly spectrum purchases by addressing inefficiencies instead [7].
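The airtime effect can be approximated with simple arithmetic: a subscriber's airtime is roughly the traffic they move divided by the rate their link can sustain. The sketch below uses that simplification, ignoring protocol overhead and retransmissions; the `Subscriber` fields, the sample values, and the 50% limit are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Subscriber:
    name: str
    peak_hour_megabits: float  # traffic moved during the busy hour
    link_rate_mbps: float      # rate achievable at current signal quality


def airtime_shares(subscribers, window_seconds=3600):
    """Fraction of the window each subscriber occupies the access point."""
    return {
        s.name: (s.peak_hour_megabits / s.link_rate_mbps) / window_seconds
        for s in subscribers
    }


def airtime_hogs(subscribers, share_limit=0.5, window_seconds=3600):
    """Names of subscribers whose airtime share exceeds share_limit."""
    shares = airtime_shares(subscribers, window_seconds)
    return [name for name, share in shares.items() if share > share_limit]


subs = [
    Subscriber("strong-signal", peak_hour_megabits=9000, link_rate_mbps=100),
    Subscriber("weak-signal", peak_hour_megabits=10000, link_rate_mbps=5),
]
print(airtime_shares(subs), airtime_hogs(subs))
```

Both example subscribers move a similar volume of data, yet the one on a weak link occupies most of the hour, which is why fixing that link can be cheaper than buying spectrum.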

Scalability

As networks grow, manual capacity planning often creates bottlenecks. Automated systems eliminate these hurdles by scaling effortlessly. Features like virtualization and cloud bursting allow ISPs to adjust resources dynamically in response to real-time demand [10]. Tools for what-if scenario modeling also empower ISPs to anticipate network behavior under various conditions - such as subscriber growth or new services - before committing to major investments [11].
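A what-if scenario can be as simple as compounding growth assumptions until demand crosses an upgrade threshold. In the sketch below, the 17% per-subscriber peak growth default comes from the figure cited earlier [7]; the subscriber counts, link capacity, and 80% utilization ceiling are hypothetical inputs.

```python
def projected_peak_mbps(subscribers, peak_mbps_per_sub,
                        sub_growth, per_sub_growth, years):
    """Peak demand after compounding both growth rates for a number of years."""
    future_subs = subscribers * (1 + sub_growth) ** years
    future_per_sub = peak_mbps_per_sub * (1 + per_sub_growth) ** years
    return future_subs * future_per_sub


def years_until_upgrade(capacity_mbps, subscribers, peak_mbps_per_sub,
                        sub_growth, per_sub_growth=0.17,
                        max_utilization=0.8, horizon=10):
    """First year in which projected peak exceeds the utilization ceiling.

    Returns None if the ceiling is not reached within the horizon.
    """
    ceiling = capacity_mbps * max_utilization
    for year in range(horizon + 1):
        demand = projected_peak_mbps(subscribers, peak_mbps_per_sub,
                                     sub_growth, per_sub_growth, year)
        if demand > ceiling:
            return year
    return None


# Scenario A: flat subscriber base. Scenario B: 10% more subscribers per year.
flat = years_until_upgrade(10_000, 1_000, 2.0, sub_growth=0.0)
growing = years_until_upgrade(10_000, 1_000, 2.0, sub_growth=0.10)
print(flat, growing)
```

Running several scenarios side by side like this shows how sensitive the upgrade date is to each assumption before any money is committed.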

Troubleshooting Speed

Instead of waiting for performance issues to surface, data-driven systems take a proactive approach. Real-time monitoring and anomaly detection streamline troubleshooting by identifying early warning signs, such as bandwidth surges or subtle latency increases. These insights enable faster interventions, reducing the impact on user experience [2].
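One lightweight way to flag early warning signs in a metric stream is an exponentially weighted moving average with a running variance. This is a generic sketch, not the method of any product cited here; the class name, smoothing factor, threshold, and latency samples are all illustrative assumptions.

```python
import math


class EwmaDetector:
    """Flags samples that deviate sharply from an exponentially weighted baseline."""

    def __init__(self, alpha=0.2, threshold=3.0, warmup=5):
        self.alpha = alpha          # weight given to the newest sample
        self.threshold = threshold  # deviations beyond this many std devs are flagged
        self.warmup = warmup        # samples to observe before flagging anything
        self.mean = None
        self.var = 0.0
        self.count = 0

    def update(self, value):
        """Feed one sample; return True if it looks anomalous."""
        self.count += 1
        if self.mean is None:
            self.mean = value
            return False
        deviation = value - self.mean
        std = math.sqrt(self.var)
        anomalous = bool(
            self.count > self.warmup
            and std > 0
            and abs(deviation) > self.threshold * std
        )
        if not anomalous:
            # Only normal samples update the baseline, so a spike cannot mask itself.
            self.mean += self.alpha * deviation
            self.var = (1 - self.alpha) * (self.var + self.alpha * deviation ** 2)
        return anomalous


# Hypothetical latency samples in milliseconds, ending with a spike.
latency_ms = [20, 21, 19, 20, 22, 20, 21, 19, 20, 60]
detector = EwmaDetector()
flags = [detector.update(v) for v in latency_ms]
print(flags)
```

Because flagged samples are excluded from the baseline, a sustained shift keeps alerting until someone investigates; a production system would likely add a rule for accepting a new normal.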

Peak Usage Handling

Managing peak demand effectively requires more than guesswork. By analyzing high-granularity data - like 15-minute intervals - and considering variables such as time, application type, and geography, AI-driven models refine demand forecasts. This precision helps ISPs make smarter decisions about capacity upgrades, ensuring investments align with actual usage patterns rather than rough estimates [1][2][4].

Advantages and Disadvantages

Each approach comes with its own set of trade-offs for ISPs. Traditional capacity planning is straightforward and familiar, often relying on spreadsheets and not requiring specialized skills. However, this simplicity can become a limitation when networks face rapid growth or unpredictable traffic patterns.

On the other hand, data-driven systems bring improved accuracy and cost savings. For example, they can reduce resource over-provisioning by 31%, improve network utilization by 45%, and cut costs by 35% in large-scale environments - all of which lower operational expenses directly [1]. These systems also help identify problematic subscribers, such as those with poor signal quality who consume disproportionate airtime - potentially saving ISPs from unnecessary spectrum purchases [7]. This efficiency becomes even more critical as networks scale to meet growing demand.

The scalability difference between these methods is striking. Traditional planning often involves manual reviews conducted annually, which can create bottlenecks as networks expand [7]. In contrast, automated systems continuously monitor and detect upgrade opportunities without requiring human intervention [6].

| Feature | Traditional Methods | Data-Driven Methods |
|---|---|---|
| Accuracy | 76% in variable workloads [1] | 93% using LSTM networks [1] |
| Cost Efficiency | Heavier over-provisioning (the baseline for the 31% reduction) [1] | 31% less over-provisioning; 35% cost reduction in large setups [1] |
| Scalability | Manual, yearly reviews [7] | Automated, continuous monitoring [6] |
| Troubleshooting | Reactive, slower diagnosis [7] | Proactive anomaly detection [2] |
| Peak Usage Handling | Static formulas struggle with growth [7] | Predictive modeling adapts to 17% annual increases [7] |

These comparisons highlight the strengths and weaknesses of each method, helping ISPs decide which fits their operational needs. Smaller ISPs with stable subscriber bases may find traditional methods adequate. However, those dealing with rapid growth or serving diverse communities - like WEIRDTOO LLC, which focuses on underserved areas - stand to gain significantly from the precision and scalability of data-driven planning, particularly during high-demand periods.

Conclusion

The comparison above highlights the clear gap between traditional planning methods and the benefits of a data-driven approach. For instance, LSTM-based models achieve an impressive 93% prediction accuracy, significantly outperforming the 76% accuracy of conventional approaches. Additionally, these models reduce resource over-provisioning by 31% - a game-changer for efficiency and cost management [1].

Making the leap to data-driven planning hinges on having robust, real-time monitoring in place. Yann Guernion from Broadcom sums it up perfectly:

"The real differentiator isn't choosing between statistical extrapolation and a sophisticated ML algorithm. It's about having access to the right data: granular, real-time network performance metrics deeply correlated with application behavior and user experience." [2]

Without high-quality data, even the most advanced AI models will fail to deliver meaningful results.

Peak usage periods require special focus. ISPs must monitor for "flat topping" and maintain sufficient capacity buffers to handle unexpected traffic spikes without compromising service quality [5][7][12]. Multi-step forecasting models are particularly useful here, offering the lead time needed for proactive network adjustments [9].

For providers serving underserved areas, such as WEIRDTOO LLC, data-driven planning uncovers hidden demand patterns and ensures resources are allocated efficiently. In educational deployments, this approach has already led to a 35% reduction in per-student costs [1].

The days of reactive planning are over. ISPs relying solely on spreadsheets and annual reviews will find it increasingly difficult to meet modern network demands. By embracing automation and machine learning, providers can better manage growth, cut costs, and maintain consistent performance during peak hours - moments that define the overall user experience. These insights offer a clear roadmap for ISPs ready to adopt smarter, forward-thinking strategies in today’s fast-evolving network environment.

FAQs

What data is needed for AI-based capacity planning?

AI-driven capacity planning hinges on the use of real-time network performance metrics - things like bandwidth usage, latency, jitter, and packet loss. These metrics, paired with historical data, play a crucial role in spotting demand patterns and seasonal trends. Machine learning models, such as LSTM neural networks, leverage this historical traffic data to improve predictions and fine-tune resource allocation. By blending real-time insights with past data, organizations can better anticipate network demands and take a proactive approach to managing capacity.

How do I choose the right forecasting model for my network?

To choose the right forecasting model, start by examining your data. Look at factors like variability, seasonal trends, and how far into the future you need to predict. For data with irregular traffic patterns, AI-powered models like LSTM can handle the unpredictability well. On the other hand, traditional statistical methods such as linear regression, moving averages, or seasonal trending are better suited for stable and consistent patterns.

If you're dealing with complex networks or systems, AI models tend to perform better because they can adjust to changing demands more effectively. It's a good idea to test multiple methods to figure out which one delivers the most accurate and dependable results for your specific situation.
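Testing multiple methods usually means backtesting: replaying history and scoring each model on data it has not yet seen. The sketch below does a walk-forward, one-step-ahead comparison of three simple baselines by mean absolute error. The model functions and the sample series are illustrative; an LSTM or any other model could be plugged in as another callable that takes the history and returns a forecast.

```python
def naive(history):
    """Forecast the next value as the last observed value."""
    return history[-1]


def moving_average(history, window=4):
    """Forecast the next value as the mean of the last few observations."""
    recent = history[-window:]
    return sum(recent) / len(recent)


def seasonal_naive(history, season=4):
    """Forecast the next value as the value one season ago."""
    return history[-season] if len(history) >= season else history[-1]


def backtest(series, model, min_history=8):
    """Mean absolute error of one-step-ahead forecasts, walking forward."""
    errors = [abs(series[t] - model(series[:t]))
              for t in range(min_history, len(series))]
    return sum(errors) / len(errors)


def rank_models(series, models, min_history=8):
    """List of (name, error) pairs sorted from best to worst."""
    scores = {name: backtest(series, fn, min_history)
              for name, fn in models.items()}
    return sorted(scores.items(), key=lambda item: item[1])


# Hypothetical traffic with a repeating four-step pattern.
traffic = [10, 20, 40, 20] * 5
models = {"naive": naive, "moving_average": moving_average,
          "seasonal_naive": seasonal_naive}
ranking = rank_models(traffic, models)
print(ranking)
```

On strongly periodic data like this example, the seasonal baseline wins easily, which is a useful reminder to beat simple baselines before investing in a more complex model.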

How can I forecast peak-hour demand without overbuilding?

To predict peak-hour demand accurately, rely on data-driven approaches. Dive into metrics such as bandwidth usage, latency, and subscriber behavior to uncover trends and seasonal variations. Tools like time series analysis or machine learning models can help forecast future traffic requirements. Automating this process ensures more accurate capacity planning, helping you balance resource allocation without overprovisioning.
